Top 100+ Machine Learning Interview Questions You Must Know in 2025

In recent years, machine learning interview questions for both freshers and experienced candidates have come to demand rigorous knowledge of the domain. Candidates are evaluated on technical and programming skills, ML concepts, and much more. This collection of 100 Machine Learning Interview Questions & Answers covers a wide range of topics, from basic to advanced concepts, to help beginners and professionals prepare for upcoming job interviews in this dynamic field.

How Do Advanced Machine Learning Interview Questions for Experienced and Beginners Prove To Be Helpful?

The 100 Machine Learning Interview Questions & Answers empower candidates to develop their technical and programming skills, as well as their understanding of fundamental concepts and approaches. We primarily aim to focus on real-life situations and the most popular ML Interview Questions & Answers that are commonly asked at job interviews of reputed companies such as Microsoft, Amazon, HCL, Google, etc.

Why Is It Important To Be Well-Versed With Machine Learning Interview Questions And Answers?

Businesses are attempting to make information and services readily available to individuals by implementing cutting-edge technology such as AI and ML. These technologies are increasingly being used in industrial sectors such as banking, finance, retail, manufacturing, healthcare, and others. Due to this, in-demand organizational roles such as data scientists, artificial intelligence engineers, machine learning engineers, and data analysts are embracing AI. Therefore, if you want to apply for these jobs, you need to be aware of the Top Machine Learning Interview Questions & Answers that recruiters and hiring managers may ask. Hence, these key ML Interview Questions & Answers have been prepared for you so that you can simplify your learning process and excel in the interviews ahead.

Therefore, whether you are an experienced developer or a beginner in the world of programming, this complete set of Advanced Machine Learning Interview Questions for Experienced and Beginners equips you to tackle your upcoming interviews efficiently. Additionally, these Machine Learning Coding Interview Questions are beneficial for individuals who are willing to do a quick revision of their ML concepts. However, it should be noted that these Machine Learning Interview Questions And Answers are only intended to act as a general guide to the types of questions that may be asked during interviews.

Hence, familiarizing yourself with the basic Machine Learning Interview Questions for Experienced and Beginners helps you land jobs in the following positions:

- Machine Learning Engineer

- Robotics Engineer

- Natural Language Processing Scientist

- Software Developer

- Data Scientist

- Cybersecurity Analyst

- Artificial Intelligence Engineer and so on.

So, what are you waiting for? Join us on this journey to discover not only the commonly asked Machine Learning Interview Questions for Beginners or Advanced but also to gain a full grasp of the field’s potential in the rapidly developing tech sector.

MACHINE LEARNING INTERVIEW QUESTIONS: BEGINNER LEVEL

1. What is machine learning?

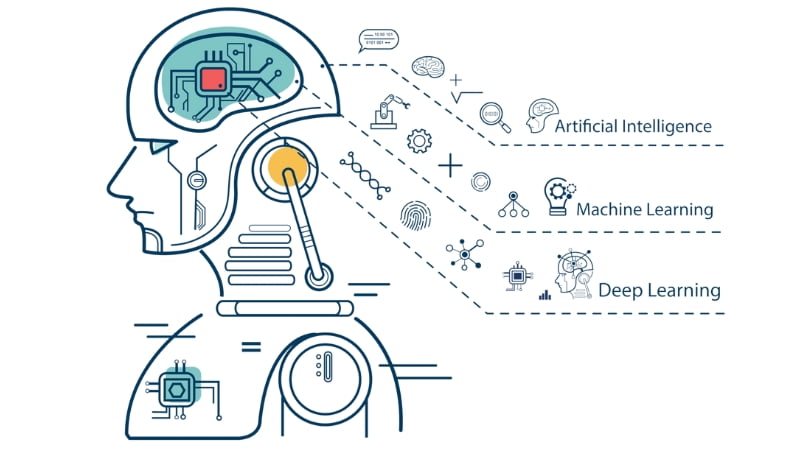

Machine learning is a subset of artificial intelligence that focuses on developing algorithms and models that allow computers to learn and make predictions or decisions without being explicitly programmed.

2. What are the different types of machine learning?

There are three main types: supervised learning, unsupervised learning, and reinforcement learning.

3. Explain supervised learning.

Supervised learning involves training a model using labeled data, where the algorithm learns to map input data to corresponding output labels.

4. What is unsupervised learning?

Unsupervised learning deals with unlabeled data, and the goal is to discover patterns or structures within the data.

5. What is reinforcement learning?

Reinforcement learning is a type of machine learning where an agent learns to make decisions by interacting with an environment and receiving rewards or penalties.

6. What is overfitting in machine learning?

Overfitting occurs when a model learns the training data too well and performs poorly on new, unseen data.

7. How can you prevent overfitting?

You can prevent overfitting by using techniques like cross-validation, regularization, and collecting more training data.

8. What is cross-validation?

Cross-validation is a technique used to assess the performance of a machine learning model by splitting the data into multiple subsets and training/testing the model on different combinations of these subsets.

9. What are hyperparameters in machine learning?

Hyperparameters are settings that are not learned from the data but are set before training the model, such as learning rate or the number of hidden layers in a neural network.

10. Explain the bias-variance trade-off.

The bias-variance trade-off is a fundamental concept in machine learning. It refers to the balance between bias, the error from overly simple assumptions that keep a model from fitting the training data, and variance, the error from sensitivity to the particular training set that keeps a model from generalizing to new, unseen data.

11. What is a decision tree?

A decision tree is a tree-like structure used for classification and regression tasks. It splits the data into subsets based on the values of input features and makes decisions at the leaf nodes.

12. What is a random forest?

A random forest is an ensemble learning method that combines multiple decision trees to improve predictive accuracy and reduce overfitting.

13. What is gradient descent?

Gradient descent is an optimization algorithm used to minimize the loss function in machine learning by iteratively adjusting model parameters in the direction of steepest descent.
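
The update rule can be sketched in a few lines of Python. This is a minimal illustration, not a production optimizer: the function name `gradient_descent`, the toy loss f(x) = (x - 3)^2, and the default learning rate are all assumptions chosen for the example.

```python
def gradient_descent(grad, x0, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient, starting from x0."""
    x = x0
    for _ in range(steps):
        x = x - learning_rate * grad(x)  # move in the direction of steepest descent
    return x

# Hypothetical loss f(x) = (x - 3)^2, whose gradient is 2 * (x - 3).
x_min = gradient_descent(lambda x: 2 * (x - 3), x0=0.0)
print(x_min)  # very close to 3, the minimizer of f
```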

14. What is deep learning?

Deep learning is a subfield of machine learning that focuses on neural networks with multiple layers (deep neural networks). It has been particularly successful in tasks like image and speech recognition.

15. Explain a neural network.

A neural network is a computational model inspired by the human brain, composed of layers of interconnected neurons that process and transform input data.

16. What is backpropagation?

Backpropagation is an algorithm used to train neural networks by adjusting the network’s weights based on the error between predicted and actual outputs.

17. What is a convolutional neural network (CNN)?

A CNN is a type of neural network designed for processing grid-like data, such as images and videos. It uses convolutional layers to automatically learn features from the input data.

18. What is a recurrent neural network (RNN)?

An RNN is a type of neural network used for sequential data, such as time series or natural language. It has recurrent connections that allow it to maintain a hidden state and process sequences.

19. What is the vanishing gradient problem?

The vanishing gradient problem occurs in deep neural networks when the gradients become too small during backpropagation, making it difficult to train deep models effectively.

20. What is transfer learning?

Transfer learning is a technique where a pre-trained model is adapted for a different but related task, saving time and resources compared to training from scratch.

21. What is clustering in unsupervised learning?

Clustering is a technique used to group similar data points together based on their features. Common algorithms include K-means and hierarchical clustering.

22. What is the difference between classification and regression?

Classification is used to predict discrete labels or categories, while regression is used to predict continuous numeric values.

23. Explain the concept of bias in machine learning.

Bias in machine learning refers to the systematic error or unfairness that can occur when a model consistently makes inaccurate predictions for specific groups or demographics.

24. What is the confusion matrix in classification?

A confusion matrix is a table used to evaluate the performance of a classification model by comparing predicted and actual class labels, showing true positives, true negatives, false positives, and false negatives.
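
As a rough sketch, the four cells can be counted directly from two label lists. The helper name `confusion_counts` and the sample labels are made up for illustration, and the sketch assumes a binary problem with `1` as the positive class.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count the four confusion-matrix cells for a binary classifier."""
    tp = fp = tn = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            if actual == positive:
                tp += 1  # predicted positive, actually positive
            else:
                fp += 1  # predicted positive, actually negative
        else:
            if actual == positive:
                fn += 1  # predicted negative, actually positive
            else:
                tn += 1  # predicted negative, actually negative
    return {"TP": tp, "FP": fp, "TN": tn, "FN": fn}

# Hypothetical labels, for illustration only.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
counts = confusion_counts(y_true, y_pred)
print(counts)
```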

25. What is precision and recall in classification?

Precision measures the accuracy of positive predictions, while recall measures the ability of the model to correctly identify all positive instances.

26. What is F1-score?

The F1-score is a metric that combines precision and recall to provide a single measure of a model’s performance, balancing the trade-off between them.
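
The F1-score is the harmonic mean of precision and recall. A small sketch (the function name `f1_score` and the input values are illustrative assumptions) shows how it penalizes a large gap between the two:

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

balanced = f1_score(0.8, 0.8)  # precision and recall agree
lopsided = f1_score(1.0, 0.1)  # perfect precision, very low recall
print(balanced, lopsided)      # the lopsided case scores far below its arithmetic mean of 0.55
```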

27. What is AUC-ROC?

The AUC-ROC (Area Under the Receiver Operating Characteristic Curve) is a metric used to assess the performance of binary classification models, measuring the area under the curve of the true positive rate vs. false positive rate.

28. What is the curse of dimensionality?

The curse of dimensionality refers to the challenges and problems that arise when working with high-dimensional data, such as increased computational complexity and the sparsity of data points.

29. What is feature engineering?

Feature engineering is the process of selecting and transforming relevant features from the raw data to improve a model’s performance.

30. What are outliers in data?

Outliers are data points that significantly differ from the majority of the data and can skew the results of machine learning models.

31. What is regularization?

Regularization is a technique used to prevent overfitting by adding a penalty term to the loss function that discourages large parameter values.

32. What is L1 and L2 regularization?

L1 regularization (Lasso) adds the absolute values of parameter weights to the loss function, encouraging sparsity. L2 regularization (Ridge) adds the squares of parameter weights.
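
To make the two penalty terms concrete, here is a minimal sketch. The function names, the weight vector, and the strength `lam` are assumptions for illustration; in practice the penalty is added to the data loss before optimization.

```python
def l1_penalty(weights, lam):
    """Lasso-style penalty: lam times the sum of absolute weights."""
    return lam * sum(abs(w) for w in weights)

def l2_penalty(weights, lam):
    """Ridge-style penalty: lam times the sum of squared weights."""
    return lam * sum(w * w for w in weights)

weights = [0.5, -2.0, 0.0, 3.0]  # hypothetical model weights
l1 = l1_penalty(weights, lam=0.1)
l2 = l2_penalty(weights, lam=0.1)
print(l1, l2)  # L2 grows faster for large weights because they are squared
```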

33. What is cross-entropy loss?

Cross-entropy loss is a loss function used in classification tasks that measures the dissimilarity between predicted and actual probability distributions.
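
For a single example with a known true class, the loss reduces to the negative log of the probability assigned to that class. The sketch below assumes this one-hot case; the name `cross_entropy`, the clipping constant `eps`, and the probabilities are illustrative.

```python
import math

def cross_entropy(true_index, probs, eps=1e-12):
    """Negative log-probability of the true class (clipped to avoid log(0))."""
    return -math.log(max(probs[true_index], eps))

confident_right = cross_entropy(0, [0.9, 0.05, 0.05])
confident_wrong = cross_entropy(0, [0.05, 0.9, 0.05])
print(confident_right, confident_wrong)  # the confident mistake is penalized much more
```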

34. What is a support vector machine (SVM)?

An SVM is a supervised learning algorithm used for classification and regression tasks. It aims to find a hyperplane that best separates data into different classes.

35. What is a kernel in SVM?

A kernel in SVM is a function that computes the inner product between data points in a higher-dimensional space, allowing SVMs to handle non-linear separation.

36. What is a bias term in a machine learning model?

A bias term, also known as an intercept, is a constant value added to the weighted sum of input features in a machine learning model.

37. Explain the concept of underfitting.

Underfitting occurs when a model is too simple to capture the underlying patterns in the data, resulting in poor performance on both training and testing data.

38. What is feature scaling?

Feature scaling is the process of normalizing or standardizing input features to ensure that they have similar scales, preventing some features from dominating others during training.
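
Two common approaches, standardization and min-max scaling, can be sketched as follows. The function names and the income values are assumptions for the example, and both helpers assume the feature is not constant (otherwise they would divide by zero).

```python
import statistics

def standardize(values):
    """Rescale to zero mean and unit (population) standard deviation."""
    mean = statistics.mean(values)
    std = statistics.pstdev(values)
    return [(v - mean) / std for v in values]

def min_max_scale(values):
    """Rescale linearly so the smallest value is 0 and the largest is 1."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

incomes = [30000, 45000, 60000, 120000]  # hypothetical feature column
z_scores = standardize(incomes)
scaled = min_max_scale(incomes)
print(z_scores)
print(scaled)
```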

39. What is the K-nearest neighbors (K-NN) algorithm?

K-NN is a simple machine learning algorithm used for classification and regression. It predicts the class or value of a data point by considering the K-nearest data points in the training set.

40. What is bagging and boosting in ensemble learning?

Bagging (Bootstrap Aggregating) involves training multiple models on different subsets of the data and combining their predictions. Boosting focuses on sequentially training models, giving more weight to previously misclassified data points.

41. Explain dimensionality reduction.

Dimensionality reduction techniques reduce the number of input features while preserving as much relevant information as possible. Common methods include Principal Component Analysis (PCA) and t-Distributed Stochastic Neighbor Embedding (t-SNE).

42. What is a bias-variance decomposition in machine learning?

Bias-variance decomposition is a way to analyze a model’s error by breaking it down into three components: bias squared, variance, and irreducible error.

43. What is the difference between bagging and boosting?

Bagging involves training multiple models independently and averaging their predictions to reduce variance. Boosting focuses on training models sequentially, giving more weight to instances that are misclassified.

44. What is a recommendation system?

A recommendation system is a machine learning application that provides personalized suggestions or recommendations to users based on their past interactions or preferences.

45. What is natural language processing (NLP)?

NLP is a field of artificial intelligence that focuses on the interaction between computers and human languages, enabling machines to understand, interpret, and generate human language.

46. What is a word embedding in NLP?

A word embedding is a technique used to represent words as dense vectors in a continuous vector space, capturing semantic relationships between words.

47. What is a recurrent neural network (RNN) used for in NLP?

RNNs are commonly used in NLP for tasks that involve sequential data, such as text generation, sentiment analysis, and machine translation.

48. What is a sequence-to-sequence model in NLP?

A sequence-to-sequence model is a type of neural network architecture used for tasks where the input and output are both sequences, such as machine translation or text summarization.

49. What is a Long Short-Term Memory (LSTM) network in NLP?

LSTM is a type of recurrent neural network designed to better capture long-range dependencies in sequential data, making it particularly effective for NLP tasks.

50. What is a word2vec model?

Word2Vec is a popular word embedding model that learns word vectors by predicting context words given a target word (Skip-gram) or predicting a target word given context words (CBOW).

51. Explain the concept of one-hot encoding.

One-hot encoding is a technique used to represent categorical data as binary vectors, where each category is encoded as a unique binary code.

52. What is transfer learning in NLP?

Transfer learning in NLP involves using pre-trained language models, like BERT or GPT, as a starting point for downstream tasks, such as text classification.

MACHINE LEARNING INTERVIEW QUESTIONS: INTERMEDIATE LEVEL

53. What is machine learning, and how does it differ from traditional programming?

Machine learning is a subset of artificial intelligence that involves training algorithms to learn patterns from data, enabling them to make predictions or decisions without explicit programming. Traditional programming requires explicit rules, while in machine learning, algorithms learn from data and generalize patterns.

54. Explain the difference between supervised, unsupervised, and reinforcement learning.

- Supervised learning: In this paradigm, the algorithm is trained on labeled data, where input-output pairs are provided, and the goal is to learn a mapping from inputs to outputs.

- Unsupervised learning: Here, the algorithm learns patterns or structures within the data without labeled outputs, typically clustering or dimensionality reduction.

- Reinforcement learning: This involves an agent interacting with an environment and learning to make sequences of decisions to maximize a cumulative reward.

55. What is overfitting in machine learning, and how can it be prevented or mitigated?

Overfitting occurs when a model learns the training data too well and performs poorly on unseen data. It can be prevented or mitigated by:

- Using more training data.

- Reducing the complexity of the model.

- Applying regularization techniques like L1 or L2 regularization.

- Using cross-validation to assess model performance.

56. What is bias-variance trade-off, and why is it important in machine learning?

The bias-variance trade-off is a fundamental concept in machine learning. It refers to the balance between two types of errors:

- Bias: Error due to overly simplistic assumptions in the learning algorithm (underfitting).

- Variance: Error due to excessive complexity, leading to sensitivity to noise in the training data (overfitting).

Finding the right balance is crucial to building models that generalize well to unseen data.

57. What are some common distance metrics used in clustering algorithms?

Common distance metrics include:

- Euclidean distance

- Manhattan distance

- Cosine distance (one minus cosine similarity)

- Mahalanobis distance
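
Three of these measures are easy to sketch in plain Python. The function names and sample vectors are assumptions for illustration. Mahalanobis distance is left out because it also needs the inverse covariance matrix of the data.

```python
import math

def euclidean(a, b):
    """Straight-line distance between two points."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def manhattan(a, b):
    """Sum of absolute coordinate differences."""
    return sum(abs(x - y) for x, y in zip(a, b))

def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

a = (1.0, 2.0, 3.0)
b = (4.0, 6.0, 3.0)
print(euclidean(a, b), manhattan(a, b), cosine_similarity(a, b))
print(1.0 - cosine_similarity(a, b))  # cosine distance
```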

58. Explain the curse of dimensionality and its implications in machine learning.

The curse of dimensionality refers to the problems and challenges that arise when working with high-dimensional data. It leads to sparsity of data, increased computational requirements, and the need for more data to maintain model performance. Techniques like dimensionality reduction can help mitigate these issues.

59. What is feature engineering, and why is it important in machine learning?

Feature engineering is the process of selecting, transforming, or creating relevant features from raw data to improve model performance. It is crucial because the quality of features often has a more significant impact on model performance than the choice of the learning algorithm.

60. Explain the concept of cross-validation.

Cross-validation is a technique used to assess the performance of a machine learning model. It involves dividing the dataset into multiple subsets (e.g., k-folds), training the model on k-1 of these subsets and testing it on the remaining one. This process is repeated k times, and the results are averaged to obtain a more robust estimate of the model’s performance.
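
The k-fold splitting step can be sketched as an index generator. This is a simplified illustration: the name `k_fold_indices` is made up, and the sketch does not shuffle the data first, which real pipelines usually do.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for each of k folds."""
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size

folds = list(k_fold_indices(n_samples=10, k=3))
for train, test in folds:
    print("train:", train, "test:", test)
```

Each sample lands in the test set exactly once, so averaging the k test scores uses every data point for evaluation.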

61. What is regularization in machine learning, and why is it useful?

Regularization is a technique used to prevent overfitting by adding a penalty term to the loss function. It encourages the model to have smaller weights or coefficients, making it less likely to fit the noise in the data. Common regularization methods include L1 (Lasso) and L2 (Ridge) regularization.

62. Describe the ROC curve and AUC.

The Receiver Operating Characteristic (ROC) curve is a graphical representation of a binary classification model’s performance. It plots the true positive rate (TPR) against the false positive rate (FPR) at different threshold values. The Area Under the ROC Curve (AUC) measures the overall performance of the model, with a higher AUC indicating better discrimination between classes.
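
One way to compute the AUC without drawing the curve relies on a known equivalence: the AUC equals the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The sketch below uses that pairwise view. The name `auc_roc` and the sample data are assumptions, and the quadratic loop is only suitable for small inputs.

```python
def auc_roc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))

labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.6, 0.4, 0.35, 0.2]  # hypothetical model scores
auc = auc_roc(labels, scores)
print(auc)
```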

63. What is the purpose of hyperparameter tuning, and how can it be done effectively?

Hyperparameter tuning involves finding the optimal settings for hyperparameters (e.g., learning rate, regularization strength) to improve a model’s performance. It can be done effectively through techniques like grid search, random search, or Bayesian optimization.

64. Explain the concept of gradient descent and its variants in optimization.

Gradient descent is an optimization algorithm used to minimize the loss function in machine learning. It involves iteratively adjusting model parameters in the direction of the steepest descent of the loss function. Variants of gradient descent include Stochastic Gradient Descent (SGD), Mini-batch Gradient Descent, and Adam, which optimize the convergence speed and stability of the algorithm.

65. What is the bias-variance decomposition of the mean squared error (MSE)?

The MSE can be decomposed into three components:

- Bias^2: The squared difference between the expected prediction and the true value.

- Variance: The variability of predictions for different training sets.

- Irreducible error: The inherent noise in the data.

The sum of these components equals the expected MSE.
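
Written out, the decomposition takes the following form. The notation is introduced here as an assumption and does not come from the text above: the data follow y = f(x) + ε with zero-mean noise of variance σ², \hat{f} is the model fitted on a random training set, and expectations are taken over training sets and noise.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{Bias}^2}
+ \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{Variance}}
+ \underbrace{\sigma^2}_{\text{Irreducible error}}
```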

66. What is the purpose of cross-entropy loss in classification problems?

Cross-entropy loss (log loss) is commonly used in classification problems to measure the dissimilarity between predicted class probabilities and true class labels. It encourages the model to assign higher probabilities to the correct class and penalizes incorrect predictions.

67. What are decision trees, and how do they handle categorical and numerical features differently?

Decision trees are a type of supervised learning model that can be used for classification and regression tasks. They handle categorical features by splitting the data based on category labels and handle numerical features by selecting thresholds to split the data into two subsets.
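
Threshold selection for a numerical feature can be sketched as a search over candidate split points. The answer above does not name a splitting criterion, so Gini impurity is an assumption here, as are the function names and the toy data.

```python
def gini(labels):
    """Gini impurity of a list of class labels (0.0 for a pure group)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))

def best_threshold(values, labels):
    """Try midpoints between sorted unique values; return (threshold, weighted impurity)."""
    best = (None, float("inf"))
    ordered = sorted(set(values))
    for lo, hi in zip(ordered, ordered[1:]):
        threshold = (lo + hi) / 2
        left = [l for v, l in zip(values, labels) if v <= threshold]
        right = [l for v, l in zip(values, labels) if v > threshold]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best[1]:
            best = (threshold, score)
    return best

ages = [22, 25, 30, 41, 47, 52]  # hypothetical numerical feature
bought = [0, 0, 0, 1, 1, 1]      # hypothetical class labels
split = best_threshold(ages, bought)
print(split)
```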

68. Explain bagging and boosting ensemble techniques.

- Bagging (Bootstrap Aggregating): Bagging involves training multiple base models (e.g., decision trees) on random subsets of the training data with replacement. It reduces variance and can improve model stability.

- Boosting: Boosting sequentially trains weak learners, giving more weight to misclassified samples in each iteration. Popular algorithms include AdaBoost and Gradient Boosting, which combine the predictions of multiple weak learners to create a strong ensemble model.

69. What is the K-nearest neighbors (K-NN) algorithm, and how does it work?

K-NN is a non-parametric algorithm used for classification and regression tasks. It works by finding the K nearest data points in the training set to a given test point and then making predictions based on the majority class (classification) or the mean value (regression) of those K neighbors.
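
The classification case can be sketched in a few lines. This brute-force version is illustrative only: the name `knn_classify`, the choice of Euclidean distance, and the toy points are assumptions.

```python
import math
from collections import Counter

def knn_classify(train_points, train_labels, query, k=3):
    """Return the majority label among the k training points nearest to query."""
    distances = sorted(
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    )
    top_k = [label for _, label in distances[:k]]
    return Counter(top_k).most_common(1)[0][0]

# Two hypothetical, well-separated groups of 2-D points.
points = [(1, 1), (1, 2), (2, 1), (6, 6), (7, 6), (6, 7)]
labels = ["a", "a", "a", "b", "b", "b"]
print(knn_classify(points, labels, query=(2, 2)))
print(knn_classify(points, labels, query=(6.5, 6.5)))
```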

70. Explain the bias-variance trade-off in the context of ensemble methods.

Ensemble methods combine multiple base models to improve generalization. Bagging-style methods such as random forests mainly reduce variance by averaging many high-variance models, while boosting methods such as gradient boosting mainly reduce bias by sequentially correcting the errors of weak learners. However, if the base models share the same high bias, averaging them as in bagging may not improve performance significantly.

71. What is the curse of dimensionality, and how does it affect clustering algorithms?

The curse of dimensionality refers to the challenges that arise when working with high-dimensional data. In clustering, high dimensionality can lead to increased computational complexity and the need for larger datasets to maintain clustering quality. It may also result in clusters becoming sparse and less meaningful in high-dimensional spaces.

72. Explain the concept of imbalanced datasets in classification and methods to handle them.

Imbalanced datasets occur when one class significantly outnumbers the other(s). To handle them:

- Resampling: Oversample the minority class or undersample the majority class.

- Synthetic data generation: Create synthetic examples for the minority class (e.g., SMOTE).

- Anomaly detection techniques.

- Cost-sensitive learning: Assign different misclassification costs to different classes.
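
The simplest of these options, random oversampling, can be sketched as follows. It duplicates minority samples at random rather than synthesizing new ones as SMOTE does. The function name and the toy data are assumptions for illustration.

```python
import random

def random_oversample(samples, labels, rng):
    """Duplicate randomly chosen minority samples until all classes are the same size."""
    by_class = {}
    for sample, label in zip(samples, labels):
        by_class.setdefault(label, []).append(sample)
    target = max(len(items) for items in by_class.values())
    out_samples, out_labels = [], []
    for label, items in by_class.items():
        extra = [rng.choice(items) for _ in range(target - len(items))]
        for item in items + extra:
            out_samples.append(item)
            out_labels.append(label)
    return out_samples, out_labels

samples = list(range(8))           # hypothetical sample ids
labels = ["ok"] * 6 + ["fraud"] * 2
balanced_samples, balanced_labels = random_oversample(samples, labels, random.Random(0))
print(balanced_labels.count("ok"), balanced_labels.count("fraud"))
```

Oversampling should be applied to the training split only, so duplicated samples do not leak into the evaluation data.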

73. What is the purpose of dimensionality reduction, and what are some common techniques for it?

Dimensionality reduction aims to reduce the number of features while preserving important information. Common techniques include Principal Component Analysis (PCA) for numerical data and t-Distributed Stochastic Neighbor Embedding (t-SNE) for visualization. For text data, techniques like Term Frequency-Inverse Document Frequency (TF-IDF) and Word Embeddings (e.g., Word2Vec) can be used.

74. Explain the concept of bias in machine learning algorithms and its impact on model predictions.

Bias in machine learning refers to the systematic error or deviation of model predictions from the true values. High bias can result in underfitting, where the model is too simple to capture underlying patterns in the data. Reducing bias often involves increasing model complexity or using more expressive models.

75. What is the difference between precision and recall, and how are they related to false positives and false negatives?

- Precision: Measures the accuracy of positive predictions and is the ratio of true positives to the sum of true positives and false positives.

- Recall: Measures the ability of the model to identify all relevant instances and is the ratio of true positives to the sum of true positives and false negatives.

They are related in that increasing one generally leads to a decrease in the other, creating a precision-recall trade-off.

76. Explain the concept of one-hot encoding and its use in handling categorical variables.

One-hot encoding is a technique used to convert categorical variables into binary vectors. It creates a binary feature for each category and assigns a 1 to the corresponding category and 0 to all others. This allows machine learning models to work with categorical data.
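
A minimal sketch of the encoding is shown below. The function name `one_hot_encode` and the color values are made up for the example, and the column order is simply the sorted category names.

```python
def one_hot_encode(values):
    """Return (sorted category names, one binary row per input value)."""
    categories = sorted(set(values))
    index = {category: i for i, category in enumerate(categories)}
    encoded = []
    for value in values:
        row = [0] * len(categories)
        row[index[value]] = 1  # mark the column of this value's category
        encoded.append(row)
    return categories, encoded

colors = ["red", "green", "blue", "green"]
categories, encoded = one_hot_encode(colors)
print(categories)
print(encoded)
```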

77. What is the difference between a generative and a discriminative model?

- Generative model: Models the joint probability distribution of both the input features and the target labels. It can be used for tasks like generating new data samples.

- Discriminative model: Models the conditional probability of the target labels given the input features. It is typically used for classification tasks.

78. Explain the bias-variance trade-off in the context of model complexity.

The bias-variance trade-off in model complexity refers to the balance between a model’s ability to fit the training data (low bias) and its ability to generalize to unseen data (low variance). As model complexity increases, bias decreases, but variance increases, potentially leading to overfitting.

79. What is a support vector machine (SVM), and how does it work for classification tasks?

A Support Vector Machine (SVM) is a supervised learning algorithm used for binary classification. It works by finding the hyperplane that maximizes the margin between classes while minimizing classification error. SVMs can handle both linear and non-linear data by using different kernel functions.

80. Explain the purpose of dropout in neural networks.

Dropout is a regularization technique used in neural networks to prevent overfitting. It randomly drops (deactivates) a fraction of neurons during each training iteration. This forces the network to learn more robust features and reduces reliance on specific neurons, improving generalization.
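
The mechanism can be sketched on a plain list of activations. This sketch uses the common "inverted dropout" variant, which rescales the kept activations during training so nothing needs to change at inference time. That variant, the function name, and the parameters are assumptions, not something the answer above specifies.

```python
import random

def dropout(activations, drop_prob, rng, training=True):
    """Zero each activation with probability drop_prob and rescale the survivors."""
    if not training or drop_prob == 0.0:
        return list(activations)  # dropout is disabled at inference time
    keep_prob = 1.0 - drop_prob   # assumes drop_prob < 1
    return [a / keep_prob if rng.random() < keep_prob else 0.0 for a in activations]

rng = random.Random(0)
train_out = dropout([1.0] * 10, drop_prob=0.5, rng=rng)
eval_out = dropout([1.0, 2.0], drop_prob=0.5, rng=rng, training=False)
print(train_out)  # a mix of 0.0 (dropped) and 2.0 (kept and rescaled)
print(eval_out)
```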

81. What is the vanishing gradient problem in deep learning, and how can it be mitigated?

The vanishing gradient problem occurs during the training of deep neural networks when gradients become very small as they are propagated backward, so the early layers learn very slowly or stop learning altogether. It can be mitigated by using activation functions like ReLU, batch normalization, or skip connections (e.g., in ResNet architectures). Gradient clipping, by contrast, addresses the related exploding gradient problem.

82. Explain the concept of transfer learning in deep learning.

Transfer learning is a technique where a pre-trained neural network model is adapted for a different but related task. The pre-trained model’s learned features and weights can be fine-tuned on a smaller dataset for the specific target task, saving training time and often leading to better performance.

83. What is the purpose of a confusion matrix, and how is it used to evaluate classification models?

A confusion matrix is a table that summarizes the performance of a classification model by showing the counts of true positives, true negatives, false positives, and false negatives. It is used to calculate metrics like accuracy, precision, recall, and F1-score. Computing it at several decision thresholds gives the points of the ROC curve.

84. Explain the concept of bias in machine learning algorithms and how it can affect model predictions.

Bias in machine learning refers to systematic errors in predictions caused by a model’s inability to capture underlying patterns in the data. High bias can lead to underfitting, where the model is too simplistic, resulting in poor performance on both training and test data.

85. What is the difference between L1 and L2 regularization, and when should each be used?

- L1 regularization (Lasso): Adds the absolute values of coefficients as a penalty to the loss function. It encourages sparsity and is useful when feature selection is important.

- L2 regularization (Ridge): Adds the squared values of coefficients as a penalty. It tends to distribute the weight among all features and is useful for reducing the impact of multicollinearity.

86. What is a neural network activation function, and why is it necessary?

An activation function introduces non-linearity to the output of a neural network node. It enables neural networks to model complex relationships in data. Common activation functions include ReLU, sigmoid, and tanh.
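
The three functions named above are short enough to write out directly. The definitions are standard; the sample inputs are arbitrary.

```python
import math

def relu(x):
    """Rectified linear unit: passes positive inputs, zeroes out negative ones."""
    return max(0.0, x)

def sigmoid(x):
    """Squashes any real input into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def tanh(x):
    """Squashes any real input into the range (-1, 1)."""
    return math.tanh(x)

for x in (-2.0, 0.0, 3.0):
    print(x, relu(x), sigmoid(x), tanh(x))
```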

87. Explain the bias-variance trade-off in the context of model selection.

In the context of model selection, the bias-variance trade-off refers to the balance between the simplicity and complexity of a model. Simple models (low complexity) may have high bias but low variance, while complex models (high complexity) may have low bias but high variance. The goal is to find a model that balances bias and variance so that the total error on unseen data is as low as possible.

88. What is the purpose of the learning rate in gradient descent, and how does it affect training?

The learning rate in gradient descent controls the size of the steps taken during each iteration of parameter updates. A high learning rate can cause the algorithm to converge quickly but may overshoot the optimal solution or even diverge. A low learning rate may converge slowly but is less likely to overshoot. Finding an appropriate learning rate is crucial for efficient training.
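
The three regimes are easy to see on a toy problem. The sketch below runs gradient descent on f(x) = x^2 from the same starting point with three learning rates. The loss, the rates, and the step count are assumptions chosen to make the behaviour visible.

```python
def run(learning_rate, steps=50, x0=1.0):
    """Gradient descent on the toy loss f(x) = x^2, whose gradient is 2x."""
    x = x0
    for _ in range(steps):
        x -= learning_rate * 2 * x
    return x

final_x = {lr: run(lr) for lr in (0.01, 0.4, 1.1)}
for lr, x in final_x.items():
    print(f"learning rate {lr}: x after 50 steps = {x:.3g}")
# 0.01 is still far from the minimum at 0, 0.4 has essentially converged,
# and 1.1 overshoots further on every step and diverges.
```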

89. Explain the difference between batch gradient descent, mini-batch gradient descent, and stochastic gradient descent.

- Batch Gradient Descent: Computes the gradient of the loss function using the entire training dataset in each iteration. It can be slow for large datasets.

- Mini-batch Gradient Descent: Divides the training dataset into smaller batches and updates the parameters using one batch at a time. It balances computational efficiency and convergence speed.

- Stochastic Gradient Descent (SGD): Updates the parameters using only one randomly selected training example at a time. It can converge quickly but may have noisy updates.
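
In code, the three variants differ only in how much data feeds each parameter update. The sketch below shows the batching step alone; the generator name `iterate_minibatches` and the toy data are assumptions for illustration.

```python
import random

def iterate_minibatches(data, batch_size, rng):
    """Shuffle once, then yield consecutive batches of at most batch_size items."""
    indices = list(range(len(data)))
    rng.shuffle(indices)
    for start in range(0, len(indices), batch_size):
        yield [data[i] for i in indices[start:start + batch_size]]

data = list(range(10))  # stand-in for ten training examples
batches = list(iterate_minibatches(data, batch_size=4, rng=random.Random(0)))
print(batches)
```

Setting `batch_size=len(data)` gives batch gradient descent (one update per pass), while `batch_size=1` gives stochastic gradient descent (one update per example).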

90. What is dropout, and how does it help prevent overfitting in neural networks?

Dropout is a regularization technique used in neural networks to reduce overfitting. During training, dropout randomly deactivates a fraction of neurons in each layer, effectively creating a different network for each training iteration. This prevents the network from relying too heavily on specific neurons and encourages robust feature learning.

91. Explain the concept of precision-recall trade-off in classification and its implications.

The precision-recall trade-off is a relationship between precision and recall in classification tasks. Increasing precision typically leads to a decrease in recall and vice versa. This trade-off means that a model can be tuned to be more conservative (high precision) or more inclusive (high recall) depending on the problem’s requirements.
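
Moving the decision threshold is the usual way to tune this trade-off. The sketch below evaluates the same scores at a low and a high threshold. The function name, the scores, and the labels are hypothetical.

```python
def precision_recall_at(threshold, labels, scores):
    """Precision and recall when scores >= threshold are predicted positive."""
    tp = sum(1 for l, s in zip(labels, scores) if s >= threshold and l == 1)
    fp = sum(1 for l, s in zip(labels, scores) if s >= threshold and l == 0)
    fn = sum(1 for l, s in zip(labels, scores) if s < threshold and l == 1)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall

labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.6, 0.4, 0.35, 0.2]
inclusive = precision_recall_at(0.3, labels, scores)     # low threshold
conservative = precision_recall_at(0.7, labels, scores)  # high threshold
print(inclusive, conservative)
```

On this data the low threshold finds every positive at the cost of some false alarms, while the high threshold makes no false alarms but misses one positive.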

92. What is the curse of dimensionality, and how does it impact machine learning algorithms?

The curse of dimensionality refers to the challenges that arise when working with high-dimensional data. It can lead to sparsity of data points, increased computational complexity, and the need for more data to maintain model performance. Many machine learning algorithms struggle in high-dimensional spaces, making dimensionality reduction techniques necessary.

93. Explain the purpose of a confusion matrix and how it is used to evaluate classification models.

A confusion matrix is a table that summarizes the performance of a classification model. It shows the counts of true positives, true negatives, false positives, and false negatives. It is used to calculate various evaluation metrics such as accuracy, precision, recall, F1-score, and to assess the model’s overall classification performance.

94. What is a ROC curve, and how is it used to evaluate binary classification models?

A Receiver Operating Characteristic (ROC) curve is a graphical representation of a binary classification model’s performance. It plots the true positive rate (sensitivity) against the false positive rate (1-specificity) at various threshold values. The area under the ROC curve (AUC) is a common metric used to compare and evaluate models, with a higher AUC indicating better discrimination between classes.

95. What is bagging, and how does it improve the performance of machine learning models?

Bagging (Bootstrap Aggregating) is an ensemble learning technique that involves training multiple base models on bootstrap samples, i.e., random subsets of the training data drawn with replacement. It improves model performance by reducing variance, which can lead to better generalization. The combined prediction (an average for regression, a vote for classification) tends to be more robust and less prone to overfitting than any single base model.

96. Explain the bias-variance trade-off in the context of ensemble methods.

In the context of ensemble methods, the bias-variance trade-off describes how the errors of the individual base models carry over to the combined model. Bagging-style ensembles such as random forests typically combine low-bias, high-variance base models (for example, deep decision trees); averaging their predictions reduces variance while leaving bias roughly unchanged. Boosting typically combines high-bias, low-variance weak learners (for example, shallow trees) sequentially, which mainly reduces bias but can overfit if too many rounds are run. The trade-off in an ensemble is therefore managed through the complexity of the base models and the number of models combined.

97. What is the purpose of cross-validation in machine learning, and how is it performed?

Cross-validation is a technique used to assess the performance of a machine learning model by splitting the dataset into multiple subsets (folds). The model is trained and evaluated multiple times, with each fold serving as the validation set while the others are used for training. Cross-validation provides a more robust estimate of a model’s performance and helps identify issues like overfitting or underfitting.
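The splitting step can be sketched as follows. The generator `k_fold_indices` is a helper written for this example and splits indices in order; in practice the data is usually shuffled first (and stratified for classification).

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, val_idx) pairs; every index is used for
    validation exactly once across the k folds."""
    # Spread the remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = list(range(start, start + size))
        train_idx = [i for i in range(n_samples) if i < start or i >= start + size]
        yield train_idx, val_idx
        start += size

folds = list(k_fold_indices(n_samples=10, k=3))
# Validation folds: [0, 1, 2, 3], [4, 5, 6], [7, 8, 9]
```

The model would be trained on each `train_idx`, scored on the matching `val_idx`, and the k scores averaged.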

98. Explain the difference between precision and recall, and why are they important in classification tasks?

Precision: Measures the accuracy of positive predictions and is the ratio of true positives to the sum of true positives and false positives. It quantifies how many of the predicted positive instances are actually positive.

Recall: Measures the ability of the model to identify all relevant instances and is the ratio of true positives to the sum of true positives and false negatives. It quantifies how many of the actual positive instances were successfully retrieved by the model.

Precision and recall are important in classification tasks because they provide a more nuanced understanding of a model’s performance beyond accuracy, especially when dealing with imbalanced datasets or situations where one type of error is more critical than the other.

99. Explain the concept of regularization in machine learning, and how does it work?

Regularization is a technique used to prevent overfitting in machine learning models. It involves adding a penalty term to the loss function that discourages overly complex models. Two common types of regularization are:

L1 Regularization (Lasso): Adds the absolute values of coefficients as a penalty, encouraging some coefficients to be exactly zero, effectively performing feature selection.

L2 Regularization (Ridge): Adds the squared values of coefficients as a penalty, distributing the weight more evenly among features and reducing their magnitudes.

100. What is the bias-variance trade-off in machine learning, and why is it important?

The bias-variance trade-off is a fundamental concept in machine learning. It refers to the balance between two types of errors in a model:

Bias: Error due to overly simplistic assumptions in the learning algorithm, leading to underfitting.

Variance: Error due to excessive sensitivity to the particular training set, typically caused by an overly complex model, leading to overfitting.

Striking the right balance between bias and variance is crucial because models that are too simple may not capture important patterns in the data, while overly complex models may fit noise and generalize poorly to new data.

101. What are hyperparameters in machine learning, and how are they different from model parameters?

Hyperparameters are settings or configurations that are not learned from the data but are set before the training process. They determine the overall behavior of the machine learning algorithm, such as learning rate, regularization strength, and the choice of the model architecture.

Model parameters are learned from the training data and represent the internal variables or weights that the algorithm adjusts during training to make predictions.

102. Explain the concept of gradient descent and its variants used in optimization.

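Gradient descent is an optimization algorithm used to minimize a loss function in machine learning. It works by iteratively adjusting model parameters in the direction of steepest descent, that is, opposite to the gradient of the loss, with the step size controlled by the learning rate. Common variants differ in how much data is used per update: batch gradient descent uses the full training set, stochastic gradient descent (SGD) uses a single example, and mini-batch gradient descent uses a small random subset.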
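A minimal sketch of the update rule on a one-dimensional problem. The loss f(x) = (x - 3)^2, the starting point, and the learning rate are arbitrary choices for illustration.

```python
def gradient_descent(grad, start, learning_rate, steps):
    """Repeatedly step against the gradient: x <- x - learning_rate * grad(x)."""
    x = start
    for _ in range(steps):
        x = x - learning_rate * grad(x)
    return x

# f(x) = (x - 3)^2 has gradient 2 * (x - 3) and its minimum at x = 3.
x_min = gradient_descent(lambda x: 2.0 * (x - 3.0), start=0.0,
                         learning_rate=0.1, steps=100)
```

With this learning rate the distance to the minimum shrinks by a factor of 0.8 per step, so `x_min` ends up very close to 3. A learning rate above 1.0 would make the iterates diverge on this function, which is why the step size is treated as a hyperparameter.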

MACHINE LEARNING INTERVIEW QUESTIONS: ADVANCED LEVEL

103. Explain the Bias-Variance Tradeoff in machine learning.

The bias-variance tradeoff is a fundamental concept in machine learning. It represents the balance between two sources of error in predictive models. High bias (underfitting) occurs when a model is too simple to capture the underlying patterns in the data. High variance (overfitting) happens when a model is overly complex and fits noise in the data. The goal is to find the level of complexity that minimizes the total error from both sources and so achieves the best predictive performance on unseen data.

104. What is regularization, and why is it important in machine learning?

Regularization is a technique used to prevent overfitting in machine learning models. It adds a penalty term to the loss function that discourages the model from learning overly complex patterns from the data. Common regularization techniques include L1 (Lasso) and L2 (Ridge) regularization. L1 regularization encourages sparse weights, which acts as a form of feature selection, while L2 regularization penalizes large weight values. Regularization helps improve the model’s generalization ability.

105. Explain the concept of cross-validation.

Cross-validation is a method for assessing a machine learning model’s performance and generalization ability. It involves splitting the dataset into multiple subsets (e.g., k-folds), training the model on some of these subsets (training data), and evaluating its performance on the remaining subset (validation data). This process is repeated multiple times, with different subsets used as validation data each time. Cross-validation helps provide a more robust estimate of a model’s performance and helps detect issues like overfitting.

106. What is the difference between supervised and unsupervised learning?

Supervised learning: In supervised learning, the model is trained on a labeled dataset, where the input data is associated with corresponding output labels. The goal is to learn a mapping from input to output, making it suitable for tasks like classification and regression.

Unsupervised learning: Unsupervised learning deals with unlabeled data. The goal is to discover patterns or structure within the data without predefined output labels. Common tasks include clustering (grouping similar data points) and dimensionality reduction (reducing the number of features while preserving important information).

107. What are some common techniques for handling imbalanced datasets in classification problems?

Dealing with imbalanced datasets is crucial in classification tasks where one class significantly outnumbers the other(s). Some common techniques include:

- Resampling: Oversampling the minority class (adding more instances of the minority class) or undersampling the majority class (reducing the number of majority class instances).

- Synthetic data generation: Techniques like SMOTE (Synthetic Minority Over-sampling Technique) generate synthetic examples of the minority class to balance the dataset.

- Cost-sensitive learning: Assigning different misclassification costs to different classes, making the model more sensitive to the minority class.

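- Ensemble methods: Using ensemble techniques like Random Forest or Gradient Boosting, usually combined with class weighting or balanced sampling, since they are often more robust to imbalance than a single model but do not remove the problem on their own.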
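Two of the techniques above, random oversampling and cost-sensitive class weights, can be sketched in a few lines. The helper names and the toy data are assumptions for this example; the weight formula n / (k * count) is one common "balanced" heuristic, not the only option.

```python
import random
from collections import Counter

def random_oversample(features, labels, rng):
    """Duplicate randomly chosen minority rows until all classes match the largest."""
    counts = Counter(labels)
    target = max(counts.values())
    out_x, out_y = list(features), list(labels)
    for cls, count in counts.items():
        pool = [x for x, y in zip(features, labels) if y == cls]
        for _ in range(target - count):
            out_x.append(rng.choice(pool))
            out_y.append(cls)
    return out_x, out_y

def balanced_class_weights(labels):
    """Weight each class inversely to its frequency: n / (k * count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * c) for cls, c in counts.items()}

# Hypothetical dataset: 8 majority rows (class 0) and 2 minority rows (class 1).
features = [[i] for i in range(10)]
labels = [0] * 8 + [1] * 2

balanced_x, balanced_y = random_oversample(features, labels, random.Random(0))
weights = balanced_class_weights(labels)  # {0: 0.625, 1: 2.5}
```

Oversampling should be applied only to the training split; resampling before the train/validation split leaks duplicated rows into the validation data.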

108. What is transfer learning, and how does it benefit deep learning models?

Transfer learning is a technique in deep learning where a pre-trained model, typically trained on a large dataset for a related task, is used as a starting point for a new, more specific task. It benefits deep learning models by leveraging the knowledge gained from the pre-trained model’s feature extraction layers. This approach reduces the need for massive amounts of data and computation, making it particularly useful for tasks with limited data. Fine-tuning can be performed on the pre-trained model’s layers to adapt it to the new task.

109. Explain the concept of hyperparameter tuning and some methods for performing it.

Hyperparameter tuning involves finding the optimal values for hyperparameters (settings not learned from the data) to improve a model’s performance. Some methods for hyperparameter tuning include:

- Grid Search: Exhaustively searching through a predefined set of hyperparameter combinations.

- Random Search: Randomly sampling hyperparameter combinations from predefined distributions.

- Bayesian Optimization: Using probabilistic models to predict which hyperparameters are likely to result in better performance and selecting them accordingly.

- AutoML tools: Automated Machine Learning (AutoML) tools can automatically search for the best hyperparameters and even select the best model architecture.
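Grid search and random search can be sketched without any library. Here `validation_error` is a hypothetical stand-in for "train the model with these hyperparameters and return its validation error"; the search ranges are likewise illustrative assumptions.

```python
import itertools
import random

def validation_error(learning_rate, reg_strength):
    # Hypothetical objective: in practice this would train and evaluate a model.
    return (learning_rate - 0.1) ** 2 + (reg_strength - 0.01) ** 2

def grid_search(grid, objective):
    """Evaluate every combination in the grid and return the best one."""
    names = list(grid)
    candidates = [dict(zip(names, values))
                  for values in itertools.product(*grid.values())]
    return min(candidates, key=lambda params: objective(**params))

def random_search(objective, n_trials, rng):
    """Sample hyperparameters log-uniformly and return the best trial."""
    candidates = [{"learning_rate": 10 ** rng.uniform(-3, 0),
                   "reg_strength": 10 ** rng.uniform(-4, -1)}
                  for _ in range(n_trials)]
    return min(candidates, key=lambda params: objective(**params))

grid = {"learning_rate": [0.01, 0.1, 1.0], "reg_strength": [0.001, 0.01, 0.1]}
best_grid = grid_search(grid, validation_error)  # {'learning_rate': 0.1, 'reg_strength': 0.01}
best_random = random_search(validation_error, n_trials=20, rng=random.Random(0))
```

Grid search cost grows multiplicatively with each added hyperparameter, which is why random search is often preferred when only a few hyperparameters really matter.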

110. What are some challenges in deploying machine learning models to production?

Deploying machine learning models to production presents several challenges, including:

- Scalability: Ensuring the model can handle large volumes of real-time data efficiently.

- Monitoring: Implementing mechanisms to monitor model performance and drift over time.

- Security: Protecting the model from attacks like adversarial inputs and ensuring data privacy.

- Versioning: Managing different versions of models and their associated data preprocessing pipelines.

- Deployment infrastructure: Selecting the right infrastructure, whether it’s on-premises or cloud-based, and optimizing it for model inference.

111. Explain the concept of a neural network’s activation function.

Activation functions introduce non-linearity into neural networks, allowing them to learn complex mappings. Common activation functions include:

- ReLU (Rectified Linear Unit): f(x) = max(0, x). It is the most widely used due to its simplicity and effectiveness.

- Sigmoid: f(x) = 1 / (1 + e^(-x)). It squashes input values into the range (0, 1) and is often used in binary classification.

- Tanh (Hyperbolic Tangent): f(x) = (e^(x) - e^(-x)) / (e^(x) + e^(-x)). Similar to the sigmoid, but it squashes input values into the range (-1, 1).
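The three functions listed above translate directly into code. This is a plain scalar sketch for illustration; deep learning libraries provide vectorized versions.

```python
import math

def relu(x):
    """f(x) = max(0, x)"""
    return max(0.0, x)

def sigmoid(x):
    """f(x) = 1 / (1 + e^(-x)), output in (0, 1)"""
    return 1.0 / (1.0 + math.exp(-x))

def tanh(x):
    """f(x) = (e^x - e^(-x)) / (e^x + e^(-x)), output in (-1, 1)"""
    return (math.exp(x) - math.exp(-x)) / (math.exp(x) + math.exp(-x))

values = [relu(-2.0), relu(3.0), sigmoid(0.0), tanh(0.0)]  # [0.0, 3.0, 0.5, 0.0]
```

Tanh is a rescaled sigmoid, tanh(x) = 2 * sigmoid(2x) - 1, which is why the two share the same S shape but differ in output range.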

112. What is the vanishing gradient problem, and how can it be mitigated in deep neural networks?

The vanishing gradient problem occurs during backpropagation in deep neural networks when gradients become very small as they are propagated backward through many layers. This can slow down or prevent training of the early layers. Common mitigation techniques include:

- Weight Initialization: Proper initialization of weights, such as He initialization, helps prevent gradients from vanishing or exploding.

- Batch Normalization: Normalizing activations within each layer helps stabilize training by reducing internal covariate shift.

- Skip Connections: Architectures like ResNet use skip connections to allow gradients to flow more easily through the network.

- Activation Functions: Choosing appropriate activation functions like ReLU can also help alleviate the vanishing gradient problem.
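The effect of the activation function can be illustrated with a deliberately simplified calculation: multiply the local derivative of the activation across 20 layers, ignoring the weights entirely. This is an assumption made to isolate the activation's contribution, not a full backpropagation.

```python
import math

def sigmoid_grad(x):
    """Derivative of the sigmoid; its maximum value is 0.25 at x = 0."""
    s = 1.0 / (1.0 + math.exp(-x))
    return s * (1.0 - s)

def relu_grad(x):
    """Derivative of ReLU: 1 for positive inputs, 0 otherwise."""
    return 1.0 if x > 0 else 0.0

def chained_gradient(local_grad, pre_activation, depth):
    """Product of the activation derivative across `depth` layers
    (weights are ignored in this simplified sketch)."""
    g = 1.0
    for _ in range(depth):
        g *= local_grad(pre_activation)
    return g

sigmoid_chain = chained_gradient(sigmoid_grad, 0.0, depth=20)  # 0.25 ** 20, about 9.1e-13
relu_chain = chained_gradient(relu_grad, 1.0, depth=20)        # 1.0
```

Even in the sigmoid's best case the gradient shrinks by a factor of four per layer, while an active ReLU passes it through unchanged.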

113. What is overfitting, and how can it be prevented or mitigated?

Overfitting occurs when a machine learning model learns the training data too well, capturing noise and random fluctuations rather than the underlying patterns. This leads to poor generalization to unseen data. To prevent or mitigate overfitting, you can:

- Use more training data: A larger dataset can help the model learn the true underlying patterns.

- Feature selection: Select relevant features and remove irrelevant ones to reduce the complexity of the model.

- Cross-validation: Implement k-fold cross-validation to assess the model’s performance on multiple subsets of the data.

- Regularization: Apply techniques like L1 or L2 regularization to penalize complex models.

- Use simpler models: Choose simpler algorithms or reduce the complexity of the model architecture.

- Ensemble methods: Combine multiple models to reduce overfitting, as ensemble models are less prone to capturing noise.

114. Explain the concept of kernel functions in support vector machines (SVM). What is their role, and what types of kernel functions are commonly used?

Kernel functions allow SVMs to operate in a higher-dimensional feature space without explicitly transforming the data into that space. The kernel function computes the dot product between the transformed feature vectors directly from the original inputs, which is far cheaper than computing the transformation itself (this is known as the kernel trick).

Commonly used kernel functions include:

- Linear Kernel: Used for linearly separable data.

- Polynomial Kernel: Used for non-linear data, with a parameter ‘d’ to control the degree of the polynomial.

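- Radial Basis Function (RBF) Kernel: Suitable for non-linear and complex data, with a parameter ‘γ’ (gamma) controlling the kernel’s width.

- Sigmoid Kernel: Based on the hyperbolic tangent, tanh(γ x·y + r); it makes the SVM behave similarly to a two-layer neural network and is used less often than the RBF kernel.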

The choice of kernel function depends on the data’s nature, and selecting the right kernel is crucial for SVM performance.
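The kernels listed above can be written as short functions, and the "without explicitly transforming the data" claim can be checked for a small case: for 2-D inputs, the degree-2 polynomial kernel with no constant term equals the dot product of an explicit 3-D feature map. Function names and default parameter values below are assumptions for this sketch.

```python
import math

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

def linear_kernel(x, y):
    return dot(x, y)

def polynomial_kernel(x, y, degree=2, coef0=0.0):
    return (dot(x, y) + coef0) ** degree

def rbf_kernel(x, y, gamma=0.5):
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))

def sigmoid_kernel(x, y, gamma=0.5, coef0=0.0):
    return math.tanh(gamma * dot(x, y) + coef0)

def explicit_quadratic_map(x):
    """Feature map whose dot product equals (x . y)^2 for 2-D inputs."""
    x1, x2 = x
    return [x1 * x1, math.sqrt(2.0) * x1 * x2, x2 * x2]

x, y = [1.0, 2.0], [3.0, 4.0]
via_kernel = polynomial_kernel(x, y)                                  # (1*3 + 2*4)^2 = 121
via_mapping = dot(explicit_quadratic_map(x), explicit_quadratic_map(y))  # also 121, up to rounding
```

The kernel gets the same value from a 2-D dot product that the explicit route gets from a 3-D one; for the RBF kernel the implicit feature space is infinite-dimensional, so the explicit route is not available at all.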

115. Explain the difference between bagging and boosting. Provide examples of algorithms for each.

Bagging (Bootstrap Aggregating) and Boosting are both ensemble techniques, but they differ in their approach:

- Bagging: Bagging involves training multiple independent models on bootstrapped (randomly resampled with replacement) subsets of the training data and averaging their predictions. This reduces variance and can improve model stability. Example algorithms include Random Forest and Bagged Decision Trees.

- Boosting: Boosting, on the other hand, focuses on iteratively training models and giving more weight to instances that the previous models misclassified. This helps to correct errors and improve overall model performance. Examples include AdaBoost, Gradient Boosting, and XGBoost.
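The "more weight to misclassified instances" step of boosting can be made concrete with one round of the AdaBoost weight update. The sketch assumes binary classification and a weak learner whose weighted error is strictly between 0 and 0.5; the function name is chosen for this example.

```python
import math

def adaboost_reweight(weights, misclassified):
    """One AdaBoost round: return the learner's vote weight (alpha)
    and the renormalized sample weights."""
    err = sum(w for w, miss in zip(weights, misclassified) if miss)
    alpha = 0.5 * math.log((1.0 - err) / err)  # assumes 0 < err < 0.5
    updated = [w * math.exp(alpha if miss else -alpha)
               for w, miss in zip(weights, misclassified)]
    total = sum(updated)
    return alpha, [w / total for w in updated]

# Five samples with uniform weights; the weak learner got only sample 2 wrong.
weights = [0.2] * 5
misclassified = [False, False, True, False, False]
alpha, new_weights = adaboost_reweight(weights, misclassified)
# new_weights is approximately [0.125, 0.125, 0.5, 0.125, 0.125]
```

After the update the misclassified sample carries half of the total weight, so the next weak learner is pushed to get it right. Bagging has no such step: every bootstrap sample is drawn independently of how earlier models performed.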

116. What are autoencoders, and how are they used in unsupervised learning and dimensionality reduction?

Autoencoders are a type of neural network used for unsupervised learning and dimensionality reduction. They consist of an encoder and a decoder. The encoder compresses the input data into a lower-dimensional representation (encoding), and the decoder reconstructs the original data from this encoding.

Autoencoders have various applications:

- Dimensionality Reduction: By training an autoencoder to learn a compressed representation of data, you can reduce its dimensionality while retaining important features.

- Anomaly Detection: Autoencoders can identify anomalies by reconstructing data; instances that deviate significantly from their reconstructions are likely anomalies.

- Data Denoising: Autoencoders can be trained to remove noise from data by learning to reconstruct the clean version from noisy inputs.

- Image Compression: Autoencoders are used in image compression by learning efficient representations of images.

Autoencoders are versatile and can be adapted to various unsupervised learning tasks.

117. Explain the bias-variance trade-off in machine learning. How can you strike a balance between them?

The bias-variance trade-off refers to the challenge of finding the right level of model complexity. High bias (underfitting) occurs when a model is too simple and cannot capture the underlying patterns in the data. High variance (overfitting) happens when a model is too complex and fits the noise in the data. To strike a balance, one can use techniques like cross-validation, regularization, and ensemble methods. Cross-validation helps to tune model complexity, regularization adds a penalty for complexity, and ensemble methods combine multiple models to reduce variance.

118. What are the key differences between Bagging and Boosting?

Bagging (Bootstrap Aggregating) and Boosting are both ensemble techniques, but they build and combine their base models differently.

- Bagging: In Bagging, multiple base models (usually of the same type) are trained independently on bootstrapped samples of the data. Predictions are combined by averaging (for regression) or voting (for classification), which reduces variance. Random Forest is a popular example.

- Boosting: In Boosting, base models are trained sequentially, and each model focuses on correcting the errors of the previous one. Examples include AdaBoost, Gradient Boosting, and XGBoost. Boosting primarily reduces bias (and can also reduce variance); it often achieves higher accuracy than Bagging but is more sensitive to noisy data and overfitting.

119. Explain the differences between L1 regularization and L2 regularization. When would you use each?

- L1 Regularization (Lasso): It adds the absolute values of the coefficients as a penalty term to the loss function. L1 regularization encourages sparsity, meaning it tends to set some coefficients to exactly zero. This is useful when you suspect that only a subset of features is relevant, effectively performing feature selection.

- L2 Regularization (Ridge): It adds the squared values of the coefficients as a penalty term to the loss function. L2 regularization encourages small but non-zero values for all coefficients. It helps stabilize coefficient estimates when features are correlated (multicollinearity) and is generally used when you believe that most features are relevant but should be weighted appropriately.

Use L1 when feature selection is crucial or when you want a sparse model, and use L2 when you want to reduce the effects of multicollinearity and maintain all features’ contributions.
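The difference in behavior can be seen in a special case with a closed-form answer. Assuming orthonormal features and the objectives ½‖y − Xb‖² + λ‖b‖₁ (Lasso) and ½‖y − Xb‖² + (λ/2)‖b‖² (Ridge), each regularized coefficient is a simple function of the unregularized least-squares coefficient. The coefficient values below are made up for illustration.

```python
def lasso_shrink(coef, lam):
    """Soft-thresholding: move the coefficient toward zero by lam,
    and set it to exactly zero if it is within lam of zero."""
    if coef > lam:
        return coef - lam
    if coef < -lam:
        return coef + lam
    return 0.0

def ridge_shrink(coef, lam):
    """Proportional shrinkage: every coefficient is scaled by 1 / (1 + lam)."""
    return coef / (1.0 + lam)

# Hypothetical unregularized least-squares coefficients.
ols = [3.0, -0.4, 0.2, -2.0]
lasso = [lasso_shrink(c, 0.5) for c in ols]  # [2.5, 0.0, 0.0, -1.5]
ridge = [ridge_shrink(c, 0.5) for c in ols]  # all four stay non-zero
```

L1 removes the two small coefficients entirely, while L2 keeps every feature and shrinks each by the same factor. With correlated features there is no such closed form, but the qualitative behavior is similar.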

120. Explain the concept of word embeddings and the difference between Word2Vec and GloVe.

Word embeddings are vector representations of words in a continuous space, capturing semantic relationships between words. Word2Vec and GloVe are two popular methods for creating word embeddings:

Word2Vec: Word2Vec models, such as Continuous Bag of Words (CBOW) and Skip-gram, learn word embeddings by predicting words in context. CBOW predicts a target word from its context words, while Skip-gram predicts context words from a target word. Word2Vec embeddings are context-based and are good at capturing syntactic and semantic relationships.

GloVe (Global Vectors for Word Representation): GloVe, on the other hand, uses global word co-occurrence statistics to learn word embeddings. It focuses on capturing the global meaning of words and is often considered more memory-efficient and scalable for large corpora.
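The difference between CBOW and Skip-gram is easiest to see in the training examples each one builds from a sentence. The sketch below only generates those examples (it does not train embeddings); the function names and the window size of 1 are choices made for this illustration.

```python
def _context(tokens, i, window):
    """Words within `window` positions of index i, excluding the word itself."""
    lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
    return [tokens[j] for j in range(lo, hi) if j != i]

def skipgram_pairs(tokens, window):
    """Skip-gram: one (target, context_word) pair per neighbor."""
    return [(target, ctx)
            for i, target in enumerate(tokens)
            for ctx in _context(tokens, i, window)]

def cbow_examples(tokens, window):
    """CBOW: one (context_words, target) example per position."""
    return [(_context(tokens, i, window), target)
            for i, target in enumerate(tokens)]

tokens = ["the", "cat", "sat", "down"]
pairs = skipgram_pairs(tokens, window=1)
# [('the', 'cat'), ('cat', 'the'), ('cat', 'sat'),
#  ('sat', 'cat'), ('sat', 'down'), ('down', 'sat')]
examples = cbow_examples(tokens, window=1)
# [(['cat'], 'the'), (['the', 'sat'], 'cat'),
#  (['cat', 'down'], 'sat'), (['sat'], 'down')]
```

GloVe takes a different route: instead of streaming over local windows like this, it first aggregates co-occurrence counts over the whole corpus and fits the embeddings to those counts.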

121. What is the vanishing gradient problem in deep learning, and how can it be mitigated?

The vanishing gradient problem occurs when gradients in deep neural networks become extremely small during backpropagation. This makes it difficult to update the weights of early layers, resulting in slow convergence and poor training. To mitigate this problem:

- Activation Functions: Replace sigmoid or tanh activations with ReLU (Rectified Linear Unit) or its variants like Leaky ReLU, which do not saturate for positive inputs.

- Weight Initialization: Use proper weight initialization techniques like He initialization for ReLU activations to avoid initializing weights too small.

- Batch Normalization: Apply batch normalization layers to normalize the inputs within each mini-batch, reducing internal covariate shift and stabilizing gradients.

- Skip Connections: Implement skip connections or residual connections in deep networks (e.g., in ResNet) to allow gradients to flow more easily.

122. Explain the concept of gradient vanishing and exploding in deep learning. How can you mitigate these issues?

Gradient vanishing occurs when gradients in a deep neural network become extremely small during backpropagation, making it difficult to update the weights effectively. Gradient exploding is the opposite, where gradients become too large and lead to instability. To mitigate these issues, you can:

- Use activation functions that mitigate vanishing gradients, such as ReLU, Leaky ReLU, or variants like Parametric ReLU (PReLU).

- Implement gradient clipping to limit the gradient values during training.

- Use batch normalization to stabilize gradients and speed up training.

- Carefully initialize network weights using techniques like He initialization or Xavier initialization.
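Gradient clipping, the usual remedy for exploding gradients, can be sketched as clipping by global norm: if the gradient vector is longer than a chosen maximum, it is rescaled to that length while keeping its direction. The function name and the threshold of 1.0 are assumptions for this example.

```python
import math

def clip_by_norm(grads, max_norm):
    """Rescale the gradient vector so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm or norm == 0.0:
        return list(grads)  # already small enough, leave unchanged
    scale = max_norm / norm
    return [g * scale for g in grads]

large = clip_by_norm([3.0, 4.0], max_norm=1.0)  # norm 5 -> roughly [0.6, 0.8]
small = clip_by_norm([0.3, 0.4], max_norm=1.0)  # norm 0.5 -> unchanged
```

Clipping by norm preserves the update direction, whereas clipping each component independently can change it.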

123. What are Generative Adversarial Networks (GANs), and how do they work?

GANs are generative models that consist of two neural networks: a generator and a discriminator. The generator tries to generate data that is similar to real data, while the discriminator tries to distinguish between real and generated data. They are trained simultaneously through a minimax game. The generator aims to minimize the discriminator’s ability to distinguish fake from real data, while the discriminator tries to maximize its accuracy. Over time, this adversarial process leads to the generator producing increasingly realistic data.

124. Explain Transfer Learning and its applications in machine learning.

Transfer Learning is a technique where a pre-trained neural network, trained on a large dataset, is adapted for a different but related task. Instead of training a model from scratch, you use the knowledge and features learned by the pre-trained model as a starting point. Transfer Learning is applied in various ways:

- Fine-tuning: You retrain some or all layers of the pre-trained model on the new task.

- Feature extraction: You use the pre-trained model as a feature extractor and build a new model on top.

- Domain adaptation: You adapt a model trained on one domain to perform well in a different domain.

- Few-shot learning: You use pre-trained models to handle tasks with very limited labeled data.

125. What is Reinforcement Learning, and how does it differ from supervised learning?

Reinforcement Learning (RL) is a type of machine learning where an agent learns to make sequences of decisions by interacting with an environment. The agent aims to maximize a cumulative reward signal over time. Key differences from supervised learning include:

RL deals with sequential decision-making, while supervised learning deals with labeled input-output pairs.

In RL, the agent explores the environment to learn from its actions, while in supervised learning, the model is trained on a fixed dataset.

RL often uses trial-and-error learning, as it may not receive explicit feedback for every action.

126. Explain the differences between generative and discriminative models in machine learning. Provide examples of each.

Generative models aim to model the joint probability distribution of the data and labels, while discriminative models focus on modeling the conditional probability of labels given the data.

- Generative Model Example: Gaussian Mixture Models (GMMs), which model the distribution of data points as a mixture of Gaussian distributions. Another example is the Generative Adversarial Network (GAN), which generates new data samples that resemble the training data.

- Discriminative Model Example: Logistic Regression, which models the probability of a data point belonging to a specific class given its features. Support Vector Machines (SVMs) are also discriminative models used for classification tasks.

127. Explain the concept of word embeddings, and why are they important in natural language processing (NLP)?

Word embeddings are vector representations of words in a continuous, lower-dimensional space. They capture semantic relationships between words and are crucial in NLP tasks because they enable the model to understand and work with words in a way that captures their meaning.

Word embeddings like Word2Vec, GloVe, and FastText are pre-trained on large corpora and can be used as feature vectors for various NLP tasks. They help improve the performance of models by providing a dense representation of words, enabling the model to generalize better, and capture semantic similarities and analogies.
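Semantic similarity between embeddings is usually measured with cosine similarity. The three-dimensional vectors below are invented for illustration only; real pre-trained embeddings typically have hundreds of dimensions and would be loaded from a file.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors: 1 means same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Hypothetical toy embeddings, not taken from any trained model.
embeddings = {
    "king":  [0.8, 0.6, 0.1],
    "queen": [0.7, 0.7, 0.1],
    "apple": [0.1, 0.0, 0.9],
}

related = cosine_similarity(embeddings["king"], embeddings["queen"])
unrelated = cosine_similarity(embeddings["king"], embeddings["apple"])
```

With these toy vectors `related` is close to 1 and `unrelated` is much smaller, which mirrors how trained embeddings place semantically similar words near each other.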